
From theory to practice: the personal desktop linux experiment

Posted by cdaffara in OSS business models, OSS data, blog on March 3rd, 2009

In my previous post, I tried to provide a simple theoretical introduction to the UTAUT technology adoption model, and the four main parameters that govern the probability of adoption; as a complement, I will present here a small demonstration of how to use the model to improve the adoption of a specific technology, namely the user-chosen personal computer with Linux as its only operating system. The “user-chosen” qualifier reflects the different adoption processes for personal computers in a personal setting (for example hobbyist, student, micro-enterprise) versus the business or public authority environment; the latter will be discussed in a future post. The idea for this experiment came out of a workshop I held in Manila, where I had the pleasure of discussing with the technical manager of the largest PC chain in the Philippines how best to introduce a Linux PC into the market.

To set the context: we will present an example of an optimization exercise for the take-up of a Linux-based PC, to be distributed and used mainly for personal purposes, and acquired through direct channels like large distribution networks, computer reseller chains and individual stores. The first important point is related to the market: there are really two separate transactions, the first one from the computer manufacturer to the chain and the second from the chain to the individual users. The two markets are distinct, and have widely differing properties:

- from manufacturer to chain: there is a small number of legal agreements (the reseller/redistribution agreements); the main driver for the manufacturer is to acquire volume, while the chain is interested in profit margins for the single sale, in overall volume (how fast the stock can be moved), and in sales of complementary products

- from chain to users: there is a large number of very small transactions; the main driver for the chain is to get as much margin on aggregate sales as possible (think selling the PC, the printer and associated consumables) while the buyer is looking for a specific set of functionalities at a reasonable price, like being able to browse the web, using email, writing letters and such.

What is useful for the first transaction may be useless for the second; the fundamental idea is that both transactions have to happen and be sustainable for the market to be self-sustaining and long running. A short-running market may even be harmful: if a chain starts selling a model and discontinues it after a little while, end users may believe that it is no longer sold because of defects or because it was not competitive. Think for a moment about the comments after WalMart stopped selling in store the very low cost Linux PC (that is still offered online); despite the fact that no real quality issues were found, most commentators associated the end of the experiment with a general failure for Linux PCs. (The reference to quality issues comes from the Comes-vs-Microsoft documents related to WalMart: “We understand that there has not been a customer satisfaction issue. WalMart sets fairly strict standards for customer return rates and service calls”.)

So, our optimization experiment needs to satisfy two constraints: guarantee a margin (possibly compounded from accessory sales) on every sale, and guarantee low inventory (fast turnaround); this means that adopters should buy with a high probability after first or second sight. Let’s recall the four parameters for adoption, and apply them to the specific situation:

- performance expectancy: is this PC fast? is it able to perform the tasks that I need?

- effort expectancy: is it easy to use?

- social influence: is it appropriate for me to use? to be seen buying it? what will my peers say when I show it?

- facilitating conditions: is there someone that will help me in using it? will it work with my network?

Let’s start with performance expectancy. Most “Linux PCs” are really very low cost, substandard machines, assembled with the overall idea that price is the only sensitive point. While it is true that Linux and open source allow for far greater customizability and speed, it is usually impossible to compensate for extreme differences in hardware speed; this means that to satisfy the majority of users, we cannot aim for “the lowest possible price”. A good estimate of the bill of materials is the median of the lowest quartile of the price span of the PCs currently on the market (approximately 10% to 20% more than the lowest price). After the hardware is selected, our suggestion is to use a standard Linux distribution (like Ubuntu) and add to it any component needed to make it work out of the box. Why a standard distribution? Because this way users will have a potential community of peers to ask for help, and the cost of maintaining it will be spread; as an example, most tailor-made Linux distributions for netbooks are not appealing because they ship old versions of software packages. This provides an explanation of why Dell had so much success in selling Linux netbooks compared to other vendors, with one third of the netbooks sold with plain Ubuntu. Having a standard distribution reduces costs for the technology provider, provides a safety mechanism for the reseller chain (which is not dependent on a single company) and provides the economic advantage of a cost-free license, which increases the chain margin.
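As a rough illustration of the pricing rule above, the following sketch computes the median of the cheapest quarter of the models on sale. It reads “lowest quartile of the price span” as “the cheapest 25% of the models”, which is only one possible interpretation; the function name `target_price` and the shelf prices are invented for the example and do not come from any real market data.

```python
import math
from statistics import median


def target_price(prices):
    """Median price of the cheapest quarter of the models on sale.

    Assumption: the "lowest quartile" is the cheapest 25% of the models,
    not the bottom quarter of the min-to-max price range.
    """
    if not prices:
        raise ValueError("need at least one price")
    ordered = sorted(prices)
    # Number of models in the lowest quartile; always keep at least one.
    k = max(1, math.ceil(len(ordered) / 4))
    return median(ordered[:k])


# Hypothetical shelf prices, made up for illustration only.
shelf_prices = [300, 330, 350, 380, 400, 450, 500, 550, 600, 650, 700, 800]

price = target_price(shelf_prices)
# Premium over the cheapest machine, as a fraction of its price.
premium = price / min(shelf_prices) - 1
print(f"target price: {price}, premium over cheapest: {premium:.0%}")
```

With these invented numbers the target lands one step above the cheapest machine, which is the intent of the rule: avoid the bottom of the market without leaving the low-price segment.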

Effort expectancy: what is the real expectancy of the user? Where does the user obtain his information from? The reality is that most potential adopters get their information from peers, magazines and in many cases from in-store exploration and talks with store clerks. The clear preference that most users show towards Windows really comes from rational reasoning based on incomplete information: the user wants to use the PC to perform some activities, he knows that software is needed to perform them, he knows that Windows has lots of software, so Windows is a safe bet. The appearance of Apple OS X demonstrated that this reasoning can be modified, for example by presenting a nicer user experience; OS X owners come in contact with other potential adopters, who are shown a different environment that seems capable of performing the most important tasks, and so the diffusion process can happen. For the same process to be possible with Linux, we must improve the knowledge of users, to show them that normal use is no more intimidating than that of Windows, and that software is available for the most common tasks.

This requires two separate processes: one to show that the “basic” desktop is capable of performing traditional tasks easily, and another to show what kind of software is available. My favourite way for doing this for in-store experiences is through a demo video, usually played in continuous rotation, that shows some basic activities: for example, how Network Manager provides a simple, one-click way to connect to WiFi, or how Nautilus provides previews of common file formats. There should be a fast, 5-minute section to show that basic activities can be performed easily. I prefer the following list:

- web browsing (showing compatibility with sites like Facebook, Hi5, Google Mail)

- changing desktop properties like backgrounds or colours

- connecting to WiFi networks

- printer recognition and setup

- package installation

I know that Ubuntu (or OpenSUSE, or Fedora) users will complain that those are functionalities that are nowadays taken for granted. But consider what even technical journalists may sometimes write about Linux: “It booted like a real OS, with the familiar GUI of Windows XP and its predecessors and of the Mac OS: icons for disks and folders, a standard menu structure, and built-in support for common hardware such as networks, printers, and DVD burners.”

Booted like a real OS. And - icons!

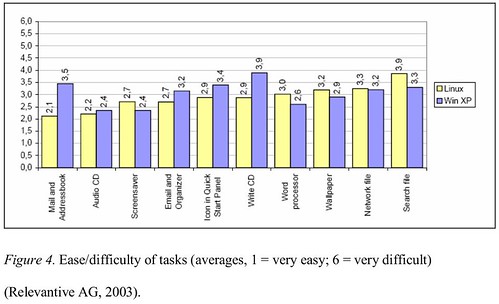

So much for the change in perspective, as the Vista user perception problem demonstrated. So, a pictorial presentation is a good medium for an initial, fear-reducing informative presentation that does not require assistance from the shop staff. Along the same lines, a small informative session may be prepared (we suggested an 8-page booklet) so that the assistants can provide answers comparable to those offered for Windows machines. The usability of modern Linux distributions is actually good enough to be comparable to that of Windows XP on most tasks. In a thesis published in 2005, the following graph was presented, using data from previous work by Relevantive:

The time and difficulty of tasks were basically the same; most of the problems that were encountered by users were related to bad naming of the applications: “The main usability problems with the Linux desktop system were clarity of the icons and the naming of the applications. Applications did not include anything concerning their function in their name. This made it really hard for users to find the right application they were looking for.” This aspect was substantially improved in recent desktop releases, which add a descriptive suffix to most application names (for example, “GIMP image editor” instead of “GIMP”). As an additional result, the following were the subjective questionnaire results:

- 87% of the Linux test participants enjoyed working with the test system (XP: 90%)

- 78% of the Linux test participants believed they would be able to deal with the new system quickly (XP: 80%).

- 80% of the Linux test participants said that they would need a maximum of one week to achieve the same competency as on their current system (XP: 85%).

- 92% of the Linux test participants rated the use of the computers as easy (XP: 95%).

This provides evidence that, when properly presented, a Linux desktop can provide a good end-user experience.

The other important part is related to applications: two to five screenshots for every major application will provide an initial perception that the machine is equally capable of performing the most common tasks; equally important is the fact that such applications need to be pre-installed and ready to use. And by ready to use, I mean with all the potential enhancements that are available but not installed by default, like the extended GIMP plugin collection that is available under Ubuntu as gimp-plugin-registry, or the various thesauri and cliparts for OpenOffice.org. A similar activity may be performed with regard to games, which should be already installed and available for the end user. Some installers for the most requested games may be added using Wine (through a pre-loader and installer like PlayOnLinux); we found that in recent Wine builds performance is quite good, and in general better than that of proprietary repackagings like Cedega.

One suggestion that we added is to have a separate set of repositories from which to update the various packages, to allow for pre-testing of package upgrades before they reach the end users. This, for example, would allow for the creation of alternate packages (outside of the Ubuntu main repositories) that guarantee the functionality of the various hardware parts even if the upstream driver changes (as recently happened with the inclusion of the new Atheros driver line in the kernel, which complicated the upgrade process for netbooks with this kind of chipset). The cost and complexity of this activity are actually fairly low, requiring mainly bandwidth and storage (something that in the time of Amazon and cloud computing has a much lower impact) and limited human intervention.
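The pre-testing step described above can be pictured as a simple gate between the upstream repositories and the vendor's own one. The sketch below is only an illustration under stated assumptions: the function `packages_to_promote`, the package names and the version strings are all hypothetical, versions are treated as opaque strings, and a real setup would rely on the distribution's own repository tooling rather than on a script like this.

```python
def packages_to_promote(upstream, published, passed_tests, held=frozenset()):
    """Return {name: version} for upgrades that may reach end users.

    upstream:     {name: version} offered by the distribution's repositories
    published:    {name: version} currently in the vendor's own repository
    passed_tests: set of (name, version) pairs validated on the shipped hardware
    held:         names frozen at their published version (e.g. a Wi-Fi driver)
    """
    promote = {}
    for name, version in upstream.items():
        if name in held:
            continue  # frozen: the vendor ships its own alternate package
        if published.get(name) == version:
            continue  # nothing new to publish
        if (name, version) in passed_tests:
            promote[name] = version  # tested on the real hardware, safe to ship
    return promote


# Hypothetical package names and versions, for illustration only.
upstream = {"browser": "3.0.7", "office-suite": "3.0.1", "wifi-driver": "2.6.28"}
published = {"browser": "3.0.6", "office-suite": "3.0.1", "wifi-driver": "2.6.27"}
passed_tests = {("browser", "3.0.7")}
held = {"wifi-driver"}

result = packages_to_promote(upstream, published, passed_tests, held)
print(result)
```

The held set is the part that corresponds to the driver case: a package whose upstream change is known to break the shipped hardware simply never gets promoted until an alternate package is ready.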

The next variable is social influence, or social acceptance; it is much more nuanced and difficult to assess, and it also changes significantly from country to country, so it is more difficult for me to provide simple indications. One aspect that we found quite effective is the addition, on the side of the machine, of a simple hologram (similar to those offered by proprietary software vendors) to indicate a legitimate origin of the software. We found that a significant percentage of potential users actually looked at the back or the side of the machine to see if such a feature was present, fearing that the machine could be loaded with pirated software. Another important aspect is the message associated with the purchase: one common error is to market the machine as “the lowest cost”, which sends two negative messages: that the machine is somehow for “the poor”, and that value (a complex, multidimensional variable) is collapsed onto price alone, making it difficult to convey that the machine is really more about “value for money” than “money”. This is similar to how Toyota invaded the US car market, by focusing both on low cost and quality, and making sure that value was perceived in every moment of the transaction, from when the potential customer entered the show room to when the car was bought. In fact, it would be better to have a combined pricing that is slightly higher than the lowest possible price, to make sure that there is a psychological “anchoring”.

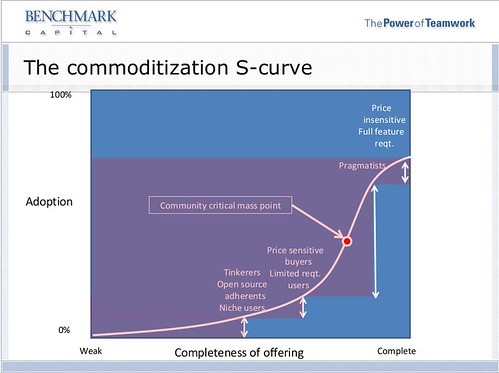

While price sensitive users are, along with “enthusiasts”, those that up to now drove the adoption of Linux on the desktop, it is necessary to extend this market to the more general population; this means that purely “price-based” approaches are not effective anymore.

As for the last aspect, facilitating conditions, the main hurdle perceived is the lack of immediate assistance by peers (something that is nearly guaranteed with Windows, thanks to the large installed base). So, a feature that we suggested is the addition of an “instant chat” icon on the desktop that lets the user ask for help, and that brings up a set of web pages with some of the most commonly asked questions and links to online fora. The real need for such a feature is somewhat reduced by the fact that the hardware is preintegrated and that pre-testing is performed before package updates, but it is a powerful psychological reassurance, and should receive a central place on the desktop. Equally important is the inclusion of non-electronic documentation, which allows for easy “browsing” before the beginning of a computing session. A very good example is the Linux Starter Pack, an introductory magazine-like guide.

We discovered that plain, well built Linux desktops are generally well accepted, with limited difficulties; after 4 weeks most users are proficient and generally happy with their new user environment.